About the EVERTims project

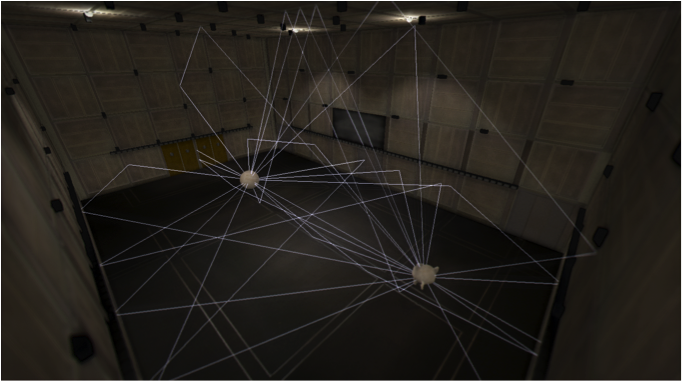

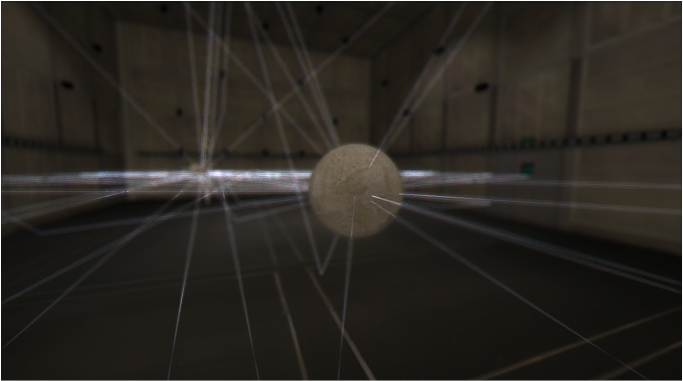

EVERTims is an open-source framework for the auralization of 3D models. It provides real-time feedback on the would-be acoustics of a room while that room is being designed.

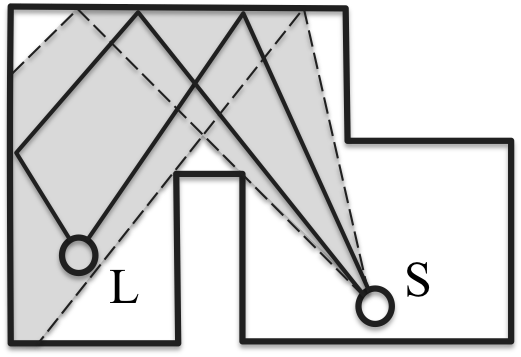

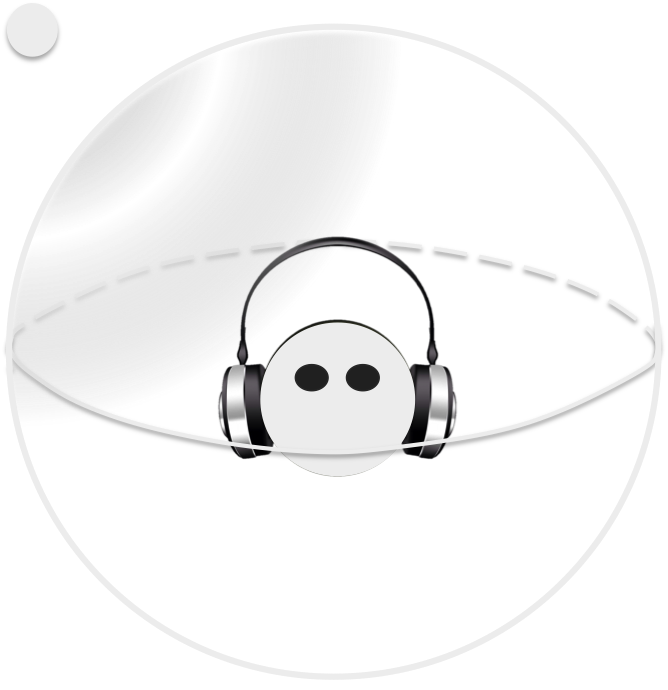

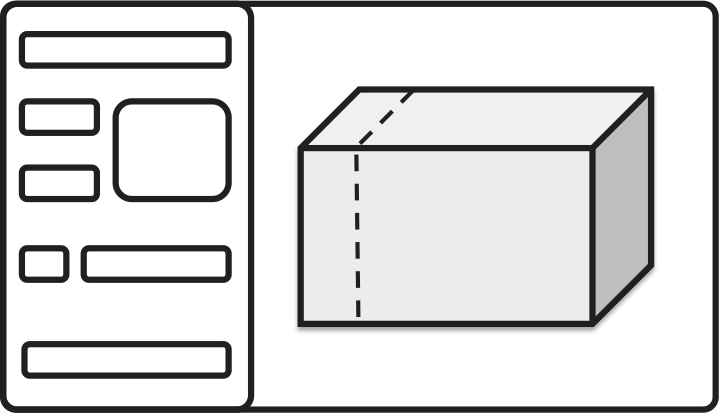

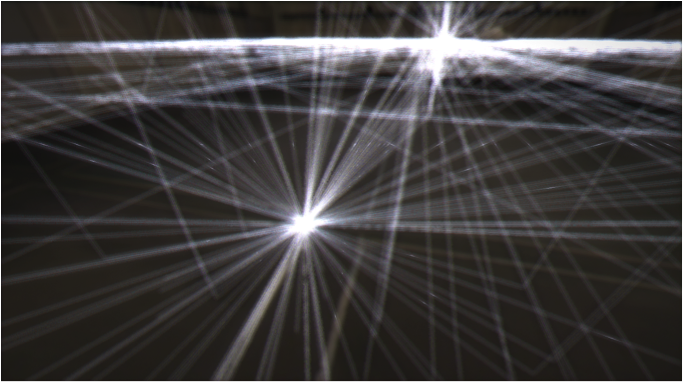

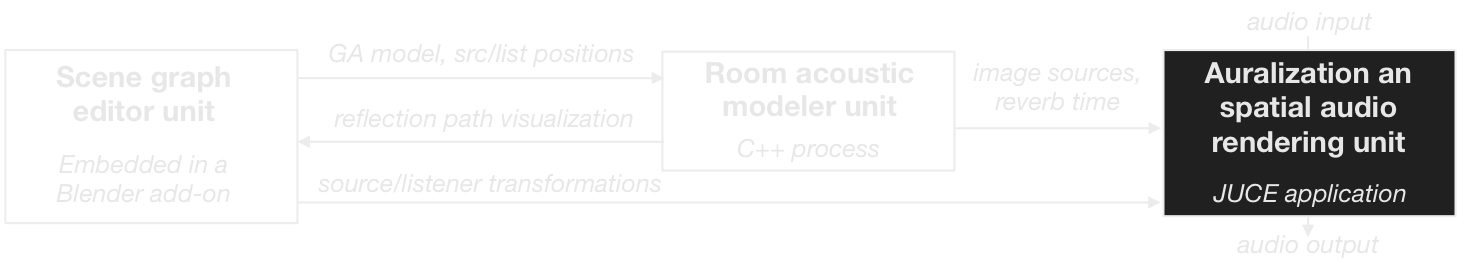

The framework is based on three components: a Blender add-on, a C++ raytracing client and a JUCE auralization engine. While the 3D room model is being designed in Blender, the add-on continuously uploads geometry and material details to the raytracing client. Based on this information, the client simulates how acoustic waves propagate in the room, from the source objects to the listener objects positioned in the Blender scene. The results of this simulation are then sent to the auralization engine, which reconstructs the Ambisonic sound field as experienced at any given listener's position and renders it for binaural listening. The framework also takes advantage of the Blender Game Engine to support in-game auralization, allowing a final interactive exploration of the designed model.
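To give a feel for what simulating propagation from a source to a listener involves, the following is a minimal Python sketch of a single first-order specular reflection off a flat surface, computed by mirroring the source across the surface. It is an illustration only, not the EVERTims client code: the function names `mirror_point` and `path_delays`, the speed of sound value, and the example coordinates are all assumptions made for this sketch.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s; assumed value for air at roughly 20 °C


def mirror_point(point, plane_point, plane_normal):
    """Mirror a 3D point across a plane.

    The plane is given by any point on it and a unit-length normal.
    (Hypothetical helper, written for this illustration.)
    """
    # Signed distance from the point to the plane along the normal.
    d = sum((p - q) * n for p, q, n in zip(point, plane_point, plane_normal))
    return tuple(p - 2.0 * d * n for p, n in zip(point, plane_normal))


def path_delays(source, listener, plane_point, plane_normal):
    """Return (direct_delay, reflected_delay) in seconds.

    The reflected path length equals the straight-line distance from the
    mirrored source to the listener. Occlusion and the finite extent of
    the surface are ignored in this sketch.
    """
    direct = math.dist(source, listener)
    image = mirror_point(source, plane_point, plane_normal)
    reflected = math.dist(image, listener)
    return direct / SPEED_OF_SOUND, reflected / SPEED_OF_SOUND


# Example: source and listener 1 m above a floor at z = 0, 4 m apart.
direct_s, reflected_s = path_delays(
    source=(0.0, 0.0, 1.0),
    listener=(4.0, 0.0, 1.0),
    plane_point=(0.0, 0.0, 0.0),
    plane_normal=(0.0, 0.0, 1.0),
)
print(f"direct: {direct_s * 1000:.2f} ms, floor reflection: {reflected_s * 1000:.2f} ms")
```

A real room simulation would repeat this kind of computation over many surfaces and higher reflection orders, and would also account for material absorption, which is why the add-on sends both geometry and material details to the client.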

EVERTims was originally developed as a collaboration between LIMSI/CNRS and the TKK/Department of Media Technology. It has since become a joint effort between researchers at those institutions and at IRCAM.